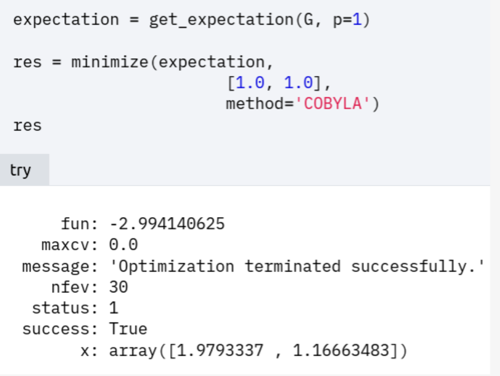

I am following the qiskit tutorial about using QAOA to solve maxcut. I have several questions about the optimizer:

1. Why is the initial point set to [1.0, 1.0]? A more general way should be random value, correct? Should we bound the random value?

2. Why does it use COBYLA optimizer? Which optimizer do people usually use for QAOA? I tried to use different optimizers and I got different fun and x and I don't know which is better...

3. How can we know if we obtain good enough or optimal parameters rather than stuck in barrean plateau?

4. In get_expectation , it uses qasm_simulator. If we use statevectore_simulator, will the result be better since it doesn't include any sampling error?

Parameter initialization and optimization of QAOA

- 3 replies

- last reply by JackSong Nov. 10, 2021

-

-

The answers to your questions are not simple. Let me try my best to answer, and hopefully could be of some help to you.

1. Why is the initial point set to [1.0, 1.0]? A more general way should be random value, correct? Should we bound the random value?

Just to be clear, the initial state is of QAOA is usually chosen as an equal superposition of all basis states, i.e.

$$

\ket{\phi_0} = (\frac{1}{2}(\ket{0} + \ket{1}))^{\otimes n},

$$

which means all possible solutions have equal probability at the beginning. Recently there is also a proposal of warm-starting QAOA where the initial state is prepared based on the classical solution for a continuous relaxation of QUBO. [1]The vector [1.0, 1.0] you mentioned corresponds to the initial guess of the parameters $(\beta, \gamma)$, which is related to the classical optimizer. Those parameters are rotation angles in the circuit. The rotation gates are periodic function so you don't need to bound them.

The initialization of these parameter is optimal to be based on your best guess. If no prior knowledge, you are right that a common practice is to choose random values as initial parameters. There are some discussion about different strategies of parameter initialization, such as heuristic strategy to reduce optimization run time [2].For example a good way to initialize the parameters is based on Troterized quantum annealing (TQA) protocol [3]. Another common practice is to initialize QAOA layer-wise which is also a greedy approach. [4]

2. Why does it use COBYLA optimizer? Which optimizer do people usually use for QAOA? I tried to use different optimizers and I got different fun and x and I don't know which is better...

COBYLAstands for constrained optimization by linear approximation. It works for constrained problems where the derivative of the objective function is not known, like QAOA. During an optimization iteration, an approximating linear programming problem is solved to obtain a candidate for the optimal solution. The candidate solution is evaluated using the original objective and constraint functions, yielding a new data point in the optimization space.COBYLAis not the only choice of method. You may choose other classical optimizer. As shown in the experiment of [5], SHGO, COBYLA and BOBYQA perform much better in terms of run-time, with SHGO being the fastest. In practice,Simultaneous Perturbation Stochastic Approximation (SPSA) algorithm is considered to be the best optimizer, because it deals with noise and requires less number of evaluations on the actual QPU backend. Running quantum circuit on the QPU is more expensive than classical computation resources. Spall's original paper for SPSA is the best place to learn. Implementation of SPSATo decide which one is better, you should decide whether you are more concerned about the optimization result or the run time or your own metric. For optimization result, the expectation of the cost Hamiltonian can tell how good the result is, the smaller the better. In this example, it should correspond to

funin the result. In the meantime, the variance of the final result should also be considered.3. How can we know if we obtain good enough or optimal parameters rather than stuck in barrean plateau?

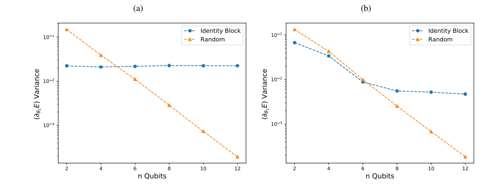

This is a complex problem troubling researchers in the field of quantum machine learning. There is discussion about using a different initialization strategy to resolve this problem. This technique involves randomly selecting some of the initial parameter values, then choosing the remaining values so that the circuit is a sequence of shallow blocks that each evaluates to the identity [6].

4. In get_expectation , it uses qasm_simulator. If we use statevectore_simulator, will the result be better since it doesn't include any sampling error?

Since

statevector_similatorgives ideal results of a measurement, namely abs square of the amplitudes, usingstatevector_simulatorwill be more "accurate" in the sense that it does not have any noise.Here is a good post discussing the difference between

qasm_simulatorandstatevector_simulator: entangledquery.com/t/what-is-the-difference-between-the-statevector-simulator-and-qasm-simulator-in-qiskit/11/#post-58Reference

[1] Daniel J. Egger, Jakub Mareček, and Stefan Woerner, Warm-starting quantum optimization

[2] Zhou, Leo, et al. "Quantum approximate optimization algorithm: Performance, mechanism, and implementation on near-term devices." Physical Review X 10.2 (2020): 021067.

[3] Stefan H. Sack and Maksym Serbyn,Quantum annealing initialization of the quantum approximate optimization algorithm

[4] Training Saturation in Layerwise Quantum Approximate Optimisation

[5] Mesman, Koen, Zaid Al-Ars, and Matthias Möller. "QPack: Quantum Approximate Optimization Algorithms as universal benchmark for quantum computers." arXiv preprint arXiv:2103.17193 (2021).

[6] Grant, Edward, et al. "An initialization strategy for addressing barren plateaus in parametrized quantum circuits." Quantum 3 (2019): 214. -

Thanks a lot for your reply! It helps me a lot!

-

- For the parameter initialization, the qiskit-textbook set it to be $[\beta, \gamma] = [1.0, 1.0]$. I guess this fixed initialization is mainly for reproducible optimization results. Just like fixing the random seed when sharing your code with someone else, they want whoever use the notebook get the same results.

- As @JXW discussed, IBMQ community highly recommend

SPSA(stochastic process involved) as the best optimizer runing on a real quantum harware.SPSAdoes deal with certain noise level and resource reduction (measurement) at the cost of accuracy. However, this QAOA notebook is running on a simulator (assuming no incoherence and SPAM noise) where people cares more about accuracy. Here,COBYLAis good enough. - The quick answer is people usually run the same algorithm multiple times with different initializations + optimizers and compare the reults pool. If they consistently converge to a single solution, we are in good shape.